Confidential AI workflows with Privatemode and n8n

n8n users self-host workflows to keep sensitive data under control. But as soon as you add AI nodes, that data is typically sent to a cloud provider such as OpenAI or Anthropic. Privatemode lets you use cloud-grade models in n8n while keeping all workflow data encrypted end-to-end.

Introduction

Add cloud-based AI to n8n workflows without exposing your data

AI-powered workflows need cloud models

n8n's powerful AI nodes (AI Agent, Basic LLM Chain, Sentiment Analysis, Summarization Chain) rely on cloud LLM providers like OpenAI. Self-hosted n8n users face a dilemma: use powerful cloud models and lose control of their data, or stick with limited local models.

Privatemode bridges the gap

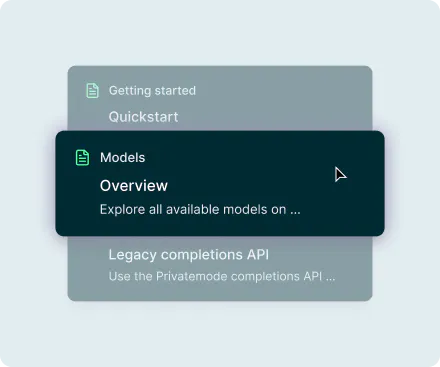

Privatemode provides an OpenAI-compatible API backed by state-of-the-art models running inside confidential computing environments. Point n8n's OpenAI Chat Model node at the Privatemode proxy and your workflow data stays encrypted, even during inference.

Encrypted at every step

Your workflow data is encrypted before it leaves your machine, processed inside a hardware-enforced enclave, and never stored or used for training. This is enforced by confidential computing hardware, not just policy.

Benefits

Why use Privatemode AI with n8n?

End-to-end encrypted AI workflows

Integrating Privatemode into n8n brings cloud-AI capabilities to your automation workflows while keeping your data confidential. Every prompt, response, and intermediate result is protected by end-to-end encryption and confidential computing. Privatemode never learns from your data.

Works with all n8n AI nodes

Since Privatemode adheres to the OpenAI API specification, it works with n8n's OpenAI Chat Model node and every AI node that uses it: AI Agent, Basic LLM Chain, Sentiment Analysis, Summarization Chain, Text Classifier, and Q&A Chain.

Simple credential change

Just update the Base URL in your OpenAI Chat Model credentials to point to the Privatemode proxy. No workflow changes, no new nodes, no plugins needed. Existing workflows gain encryption instantly.

How to get started

Set up confidential agents in n8n in just a few steps

Get your API key

If you don't have a Privatemode API key yet, you can generate one for free here.

Run the Privatemode proxy

The proxy verifies the integrity of the Privatemode service using confidential computing-based remote attestation. The proxy also encrypts all data before sending and decrypts data it receives.
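Before wiring the proxy into n8n, it can help to confirm that something is actually listening where you expect it. The following is a minimal sketch, not part of Privatemode's tooling: the function name `proxy_is_listening` is hypothetical, and the host and port are assumptions taken from the example Base URL used later in this guide. It only checks that a TCP connection can be opened; it does not verify attestation or encryption.

```python
import socket


def proxy_is_listening(host: str = "localhost", port: int = 8080, timeout: float = 2.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        # create_connection raises OSError if the connection is refused or times out.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Host and port are assumptions; adjust them to match how you started the proxy.
    print("proxy reachable:", proxy_is_listening())
```

If this prints `False`, check that the proxy process is running and that the port matches the one you plan to enter in n8n.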

Set up an AI agent in n8n

Follow the official n8n tutorial to set up an AI agent. Since Privatemode adheres to the OpenAI interface specification, you can use the standard OpenAI Chat Model node as outlined in the tutorial. However, you’ll need to reconfigure the credentials used in Step 5 of the tutorial.

In addition to the AI Agent node, the OpenAI Chat Model can be used in several other powerful AI nodes, including:

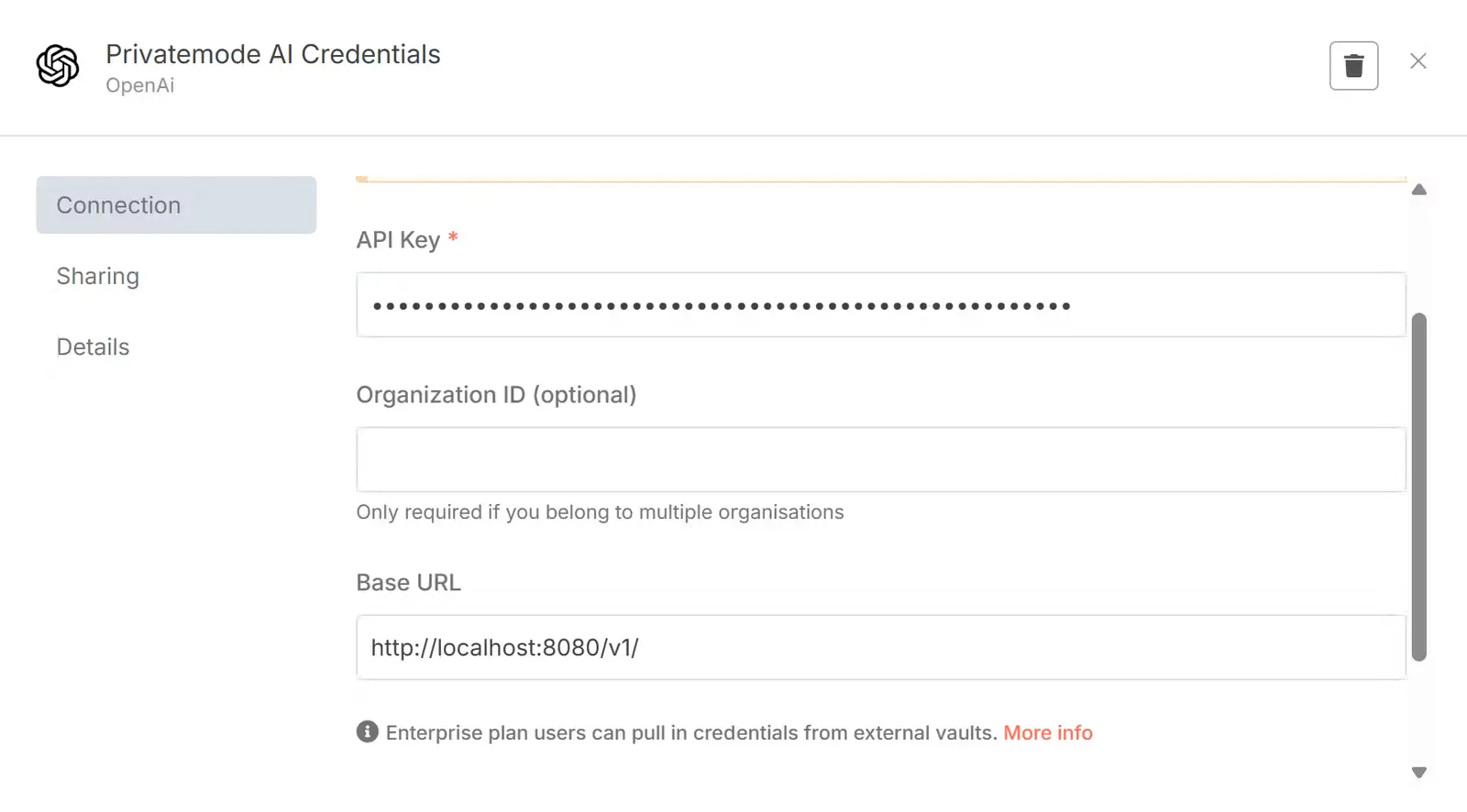

Adjust credentials

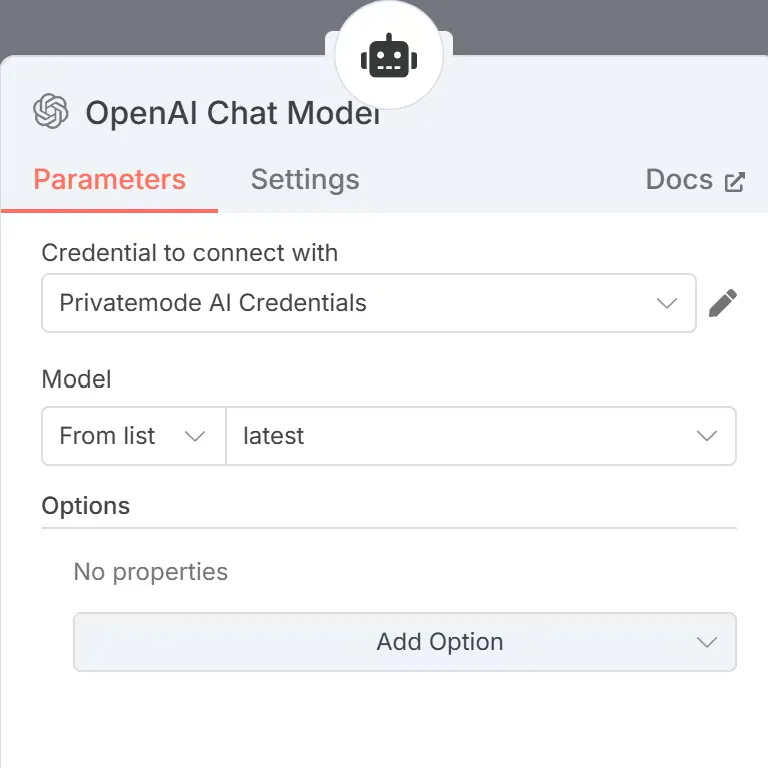

To ensure that all data is sent through the Privatemode proxy, set the following credentials for your OpenAI Chat Model node:

- API Key: Set this to a placeholder value, such as ‘placeholder’. Authentication is handled by the proxy.

- Base URL: Set this to the /v1 route of your Privatemode proxy, for example, "http://localhost:8080/v1".
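To see what these two settings amount to, here is a rough sketch of the kind of OpenAI-style chat request that ends up going to the proxy. It uses only the Python standard library. The Base URL and placeholder key are the example values from the list above; the model name `example-model` and the helper names are hypothetical, and the request shape follows the public OpenAI chat completions format rather than anything specific to Privatemode.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1"  # example value from this guide
API_KEY = "placeholder"                # any value; the proxy handles authentication
MODEL = "example-model"                # hypothetical; use a model your proxy offers


def build_chat_request(prompt: str, base_url: str = BASE_URL, model: str = MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the proxy."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


def send_chat(prompt: str) -> str:
    """Send the request to the proxy and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

With the proxy running, calling `send_chat("Hello")` should return the model's reply as a string. Note that the request goes to a plain local address: per the proxy description above, encryption happens inside the proxy before anything is sent onward.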

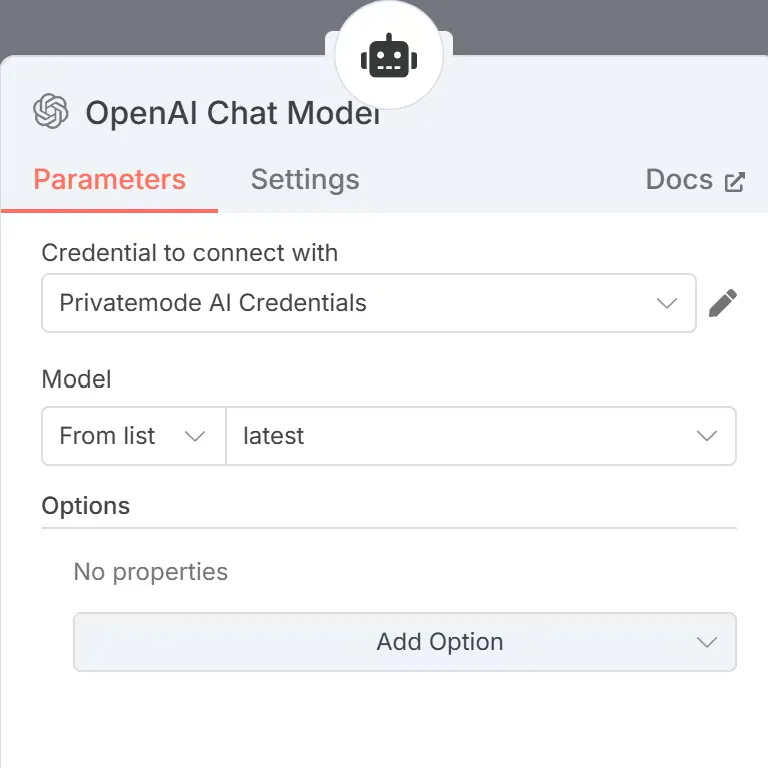

Set the parameters of the node

Set the parameters of the OpenAI Chat Model node to use the correct credentials and select the model you want to use.
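If you are unsure which model names are available, one option is to ask the proxy directly. The sketch below assumes the proxy exposes the standard OpenAI-style `GET /v1/models` route and returns the usual `{"data": [{"id": ...}]}` shape; both are assumptions based on the OpenAI API format, and the helper names are hypothetical.

```python
import json
import urllib.request


def extract_model_ids(payload: dict) -> list[str]:
    """Pull model IDs out of an OpenAI-style /models response body."""
    return [entry["id"] for entry in payload.get("data", [])]


def list_models(base_url: str = "http://localhost:8080/v1") -> list[str]:
    """Ask the proxy which models it offers (assumes a /models route exists)."""
    with urllib.request.urlopen(base_url.rstrip("/") + "/models", timeout=10) as resp:
        return extract_model_ids(json.load(resp))
```

Calling `list_models()` with the proxy running should return a list of model IDs, any of which can be selected in the node's model parameter.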

Done!

You’ve successfully integrated confidential AI into your workflow.

FAQ

Frequently asked questions about using Privatemode with n8n

No structural changes are needed. You reconfigure the OpenAI Chat Model node credentials to point to the Privatemode proxy by changing the Base URL field. All AI nodes that use this model, including AI Agent, Basic LLM Chain, and Summarization Chain, gain end-to-end encryption automatically.

OpenClaw

Run an open-source personal AI assistant across your messaging apps and keep your AI interactions encrypted end-to-end.

Open WebUI

Run Open WebUI as your team's self-hosted AI chat interface, with cloud-grade LLMs and full privacy.
